CSU Scholarship Application Deadline

This link, and many others, gives the formula to compute the output vectors from … It is just not clear where we get the Wq, Wk and Wv matrices that are used to create Q, K and V. All the resources explaining the model mention them as if they were already given.
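Here is a minimal pure-Python sketch of where the matrices come from, under toy assumptions. In the paper, Wq, Wk and Wv are ordinary learnable parameters of each attention layer. They start as random values and are then updated by backpropagation along with the rest of the network (training is not shown here). The sketch applies the paper's scaled dot-product formula, Attention(Q, K, V) = softmax(QKᵀ / √d_k) V. The following are illustrative choices, not taken from the thread: the sizes (d_model = 4, d_k = 3, d_v = 2, five tokens), the Gaussian initialisation, and the helper names `matmul`, `init_matrix` and `attention`.

```python
import math
import random

def matmul(a, b):
    """Multiply an (n x m) matrix by an (m x p) matrix, both stored as lists of rows."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def transpose(a):
    return [list(row) for row in zip(*a)]

def softmax(row):
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def init_matrix(rows, cols, rng):
    # Hypothetical toy initialisation: small random values.
    # In a real model these numbers are then learned by backpropagation.
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]

def attention(q, k, v):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(k[0])
    scores = matmul(q, transpose(k))
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights

d_model, d_k, d_v = 4, 3, 2
rng = random.Random(0)

# Wq, Wk and Wv are plain parameters of the layer, not inputs to the model
w_q = init_matrix(d_model, d_k, rng)
w_k = init_matrix(d_model, d_k, rng)
w_v = init_matrix(d_model, d_v, rng)

x = [[rng.gauss(0.0, 1.0) for _ in range(d_model)] for _ in range(5)]  # 5 token embeddings
q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
out, weights = attention(q, k, v)
print(len(out), len(out[0]))  # 5 2
```

Each row of `weights` sums to 1. Each output row has the width of V (d_v), not the width of Q or K.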
In the Transformer model described in "Attention Is All You Need", I'm struggling to understand how the encoder output is used by the decoder. But why is V the same as K? The only explanation I can think of is that V's dimensions match the product of Q and K. However, V has K's embeddings, and not Q's.

In the question, you ask whether K, Q, and V are identical.
1) It would mean that you use the same matrix for K and V, so you lose 1/3 of the parameters, which would decrease the capacity of the model to learn.
2) As I explain in the …

In order to make use of the information from the different attention heads, we need to let the different parts of the value (of the specific word) affect one another.
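The next sketch is again pure Python, with hypothetical helper names (`make_params`, `multi_head_attention`, `qkv_param_count`) and toy sizes. It illustrates three points from the thread:

- Encoder-decoder attention. Passing a separate `memory` gives this form of attention, as described in the paper. Queries come from the decoder positions. Keys and values are two different projections of the same encoder output. The result therefore has one row per decoder position.
- Mixing the heads. The head outputs are concatenated and multiplied by an output matrix Wo. This is what lets the per-head slices of the value affect one another.
- The 1/3 parameter loss. Assume d_model = 512, as in the paper's base model, and ignore biases and Wo. Sharing one matrix for K and V then cuts the Q/K/V projection parameters from 3·d_model² to 2·d_model².

```python
import math
import random

def matmul(a, b):
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def transpose(a):
    return [list(r) for r in zip(*a)]

def softmax(row):
    m = max(row)
    e = [math.exp(x - m) for x in row]
    s = sum(e)
    return [x / s for x in e]

def rand_matrix(rows, cols, rng):
    # Hypothetical toy initialisation; a trained model learns these values.
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]

def make_params(d_model, n_heads, rng):
    d_head = d_model // n_heads
    heads = [{name: rand_matrix(d_model, d_head, rng) for name in ("wq", "wk", "wv")}
             for _ in range(n_heads)]
    return {"heads": heads, "wo": rand_matrix(n_heads * d_head, d_model, rng)}

def multi_head_attention(x, params, memory=None):
    """Self-attention if memory is None; otherwise Q from x, K and V from memory."""
    source = x if memory is None else memory
    concat = [[] for _ in x]
    for h in params["heads"]:
        # K and V use different matrices even though they read the same source
        q, k, v = matmul(x, h["wq"]), matmul(source, h["wk"]), matmul(source, h["wv"])
        scale = math.sqrt(len(k[0]))
        weights = [softmax([s / scale for s in row]) for row in matmul(q, transpose(k))]
        for i, row in enumerate(matmul(weights, v)):
            concat[i].extend(row)  # place this head's output next to the others
    # Wo mixes the concatenated head slices, so every output feature can depend on every head
    return matmul(concat, params["wo"])

def qkv_param_count(d_model, share_kv=False):
    # The Q, K and V projections over all heads are each d_model x d_model in total
    # (biases and Wo are ignored here).
    n_matrices = 2 if share_kv else 3
    return n_matrices * d_model * d_model

rng = random.Random(0)
d_model, n_heads = 8, 2
# For brevity one parameter set is reused; a real decoder layer has separate
# parameters for its self-attention and its encoder-decoder attention.
params = make_params(d_model, n_heads, rng)
encoder_out = [[rng.gauss(0, 1) for _ in range(d_model)] for _ in range(6)]  # 6 source tokens
decoder_in = [[rng.gauss(0, 1) for _ in range(d_model)] for _ in range(3)]   # 3 target tokens

cross = multi_head_attention(decoder_in, params, memory=encoder_out)
print(len(cross), len(cross[0]))  # 3 8: one row per decoder position
print(qkv_param_count(512), qkv_param_count(512, share_kv=True))  # 786432 524288
```

The encoder length (6) never appears in the output shape. It only sets how many keys and values each decoder query attends over.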
I think it's pretty logical: you have a database of knowledge that you derive from the inputs, and you query it by asking Q. In this case (encoder-decoder attention), K and V are both derived from the inputs, that is, the encoder output, while Q comes from the outputs, that is, the decoder.

Application Dates & Deadlines CSU PDF

CSU scholarship application deadline is March 1 Colorado State University

Attention Seniors! CSU & UC Application Deadlines Extended News Details

University Application Student Financial Aid Chicago State University

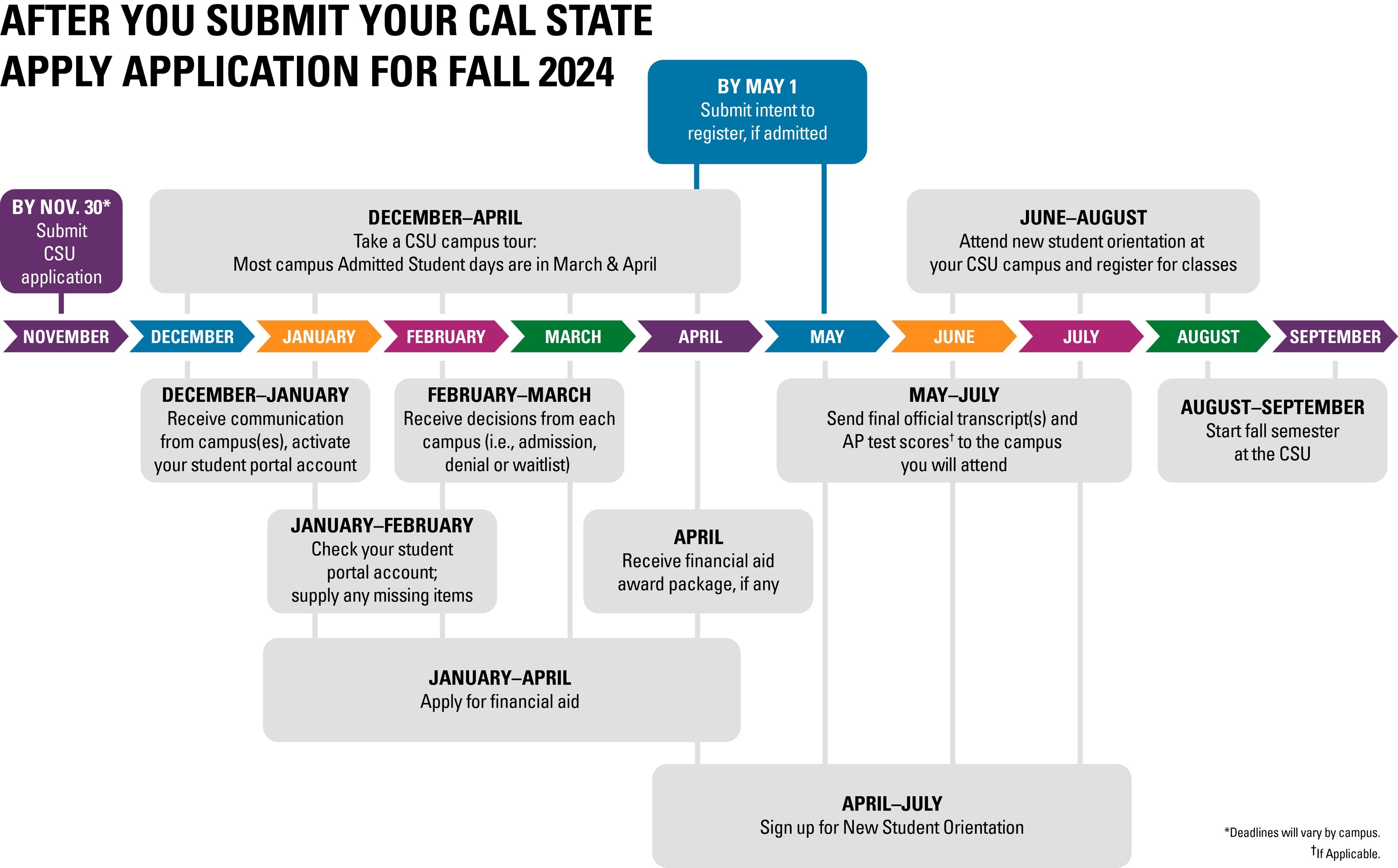

You’ve Applied to the CSU Now What? CSU

CSU application deadlines are extended — West Angeles EEP

CSU Office of Admission and Scholarship

Fillable Online CSU Scholarship Application (CSUSA) Fax Email Print

CSU Apply Tips California State University Application California
